While an "XML-in XML-out single pipe" approach has several advantages that make it a good choice for a core library, it is not the way most of us like to code. We don't want to create and parse XML every time we make a call. So we should write something to do that for us. Our ideal representation of a function call depends on where we are working.

In Excel it is a set of functions in an XLL, registered with the application, consuming XLOPERs and integrating nicely with the environment (for example, through the "Help on this function" link in the Insert Function dialogue). In C++ it is a function declared in a header, ready for us to include in our program, whose arguments and return value are C++ types. If we are driving an end-user application, then we probably want to be presented with an appropriate collection of GUI widgets that represent the function call.

At first sight, supporting a strongly-typed language like C++ is a bit of a stretch for an underlying API that works with arrays of variants (of numbers, strings, Booleans). However, there is nothing to stop us specifying a type for each input argument and obtaining a corresponding C++ object on the inside of the library during the function call.

For example, if a calibration function consumes the string "MyOptimizer" from Part 4, it can retrieve the corresponding object from the map of name to (smart pointer to) object described in Part 3. The type of that input is not "string", but "optimizer". Alternatively, the function might allow such an object to be constructed on the fly from a tolerance and a number of iterations. For an example of such an approach in C++, see this paper.
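The idea of an input whose declared type is "optimizer" rather than "string" can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Optimizer` class, the `object_store` dictionary and the `resolve_optimizer` helper are hypothetical names invented here, not part of the library described in this series.

```python
from dataclasses import dataclass


@dataclass
class Optimizer:
    """Hypothetical stand-in for a library-side optimizer object."""
    tolerance: float
    max_iterations: int


# Hypothetical map of name -> object, standing in for the one kept by the library.
object_store = {"MyOptimizer": Optimizer(tolerance=1e-8, max_iterations=200)}


def resolve_optimizer(arg):
    """Turn a variant input declared as type 'optimizer' into an Optimizer.

    A string is looked up by name; a [tolerance, iterations] pair builds a
    temporary object on the fly.
    """
    if isinstance(arg, str):
        try:
            return object_store[arg]
        except KeyError:
            raise ValueError(f"no optimizer named {arg!r}") from None
    if isinstance(arg, (list, tuple)) and len(arg) == 2:
        tolerance, iterations = arg
        return Optimizer(float(tolerance), int(iterations))
    raise TypeError("expected an optimizer name or [tolerance, iterations]")
```

Either `resolve_optimizer("MyOptimizer")` or `resolve_optimizer([1e-6, 50])` hands the calibration code a real object, so the variant-based interface stays on the outside of the library.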

Anyway, in all of the use-cases described above, given a call_function-style API, the goal is to map from bespoke constructs native to the calling environment to a correctly assembled XML function call (and back from the XML result). We have isolated all the pieces that are specific to a given calling environment away from the common parts which are presented in the core library.
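A minimal sketch of such a mapping, written in Python, might look like the following. The XML layout (`call`, `arg`, `result`, `value`, `error`), the `Add` function and the body of `call_function` are all assumptions made for illustration; the real schema and the real core library are not shown in this article.

```python
import xml.etree.ElementTree as ET


def build_call(function_name, **arguments):
    """Assemble an XML function call from native Python values."""
    root = ET.Element("call", {"function": function_name})
    for name, value in arguments.items():
        arg = ET.SubElement(root, "arg", {"name": name})
        arg.text = str(value)
    return ET.tostring(root, encoding="unicode")


def parse_result(xml_text):
    """Extract the numeric value from an XML result, raising on a reported error."""
    root = ET.fromstring(xml_text)
    error = root.find("error")
    if error is not None:
        raise RuntimeError(error.text)
    return float(root.findtext("value"))


def call_function(xml_text):
    """Stand-in for the core library's single pipe (hypothetical behaviour).

    It understands one function, 'Add', which sums arguments 'a' and 'b'.
    """
    root = ET.fromstring(xml_text)
    if root.get("function") != "Add":
        return "<result><error>unknown function</error></result>"
    args = {a.get("name"): float(a.text) for a in root.findall("arg")}
    total = args["a"] + args["b"]
    return f"<result><value>{total}</value></result>"


def add(a: float, b: float) -> float:
    """The wrapper a caller actually sees: native types in, native types out."""
    return parse_result(call_function(build_call("Add", a=a, b=b)))
```

Only `build_call`, `parse_result` and the thin `add` wrapper belong to the calling environment; everything behind `call_function` is common to all of them.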

Now, in the case of the GUI, it helps a lot if the library is self-describing. The technical term for this is reflection. It is a standard part of some languages (Python, for example), but is not often seen in analytics libraries. It should be, though, for two main reasons:

- the vast majority of the code needed to implement higher-level APIs, whether code or GUI based, can be generated by machines,

- this code-generation can be done post-build, which means that users of the library can do it themselves.

This second point is of particular value to software vendors with partner relationships.
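To make the code-generation point concrete, here is a small Python sketch that turns a reflected description of a function into the source of a wrapper. The `metadata` dictionary, the `Discount` function and the `dispatch` helper are hypothetical; in practice the description would come from the library's own reflection calls and `dispatch` would perform the XML round trip.

```python
# Hypothetical description of one library function, as reflection might report it.
metadata = {
    "name": "Discount",
    "help": "Discount a cash flow at a flat rate.",
    "arguments": [
        {"name": "amount", "type": "float"},
        {"name": "rate", "type": "float"},
        {"name": "years", "type": "float"},
    ],
}


def generate_wrapper(info):
    """Return Python source for a wrapper function described by `info`."""
    params = ", ".join(f"{a['name']}: {a['type']}" for a in info["arguments"])
    passed = ", ".join(f"{a['name']}={a['name']}" for a in info["arguments"])
    lines = [
        f"def {info['name'].lower()}({params}):",
        f"    '''{info['help']}'''",
        f"    return dispatch({info['name']!r}, {passed})",
    ]
    return "\n".join(lines) + "\n"


def dispatch(function_name, **arguments):
    """Stand-in for the XML round trip: report what would be sent."""
    return function_name, arguments


# Generation happens after the library is built, so a user can run this step.
source = generate_wrapper(metadata)
namespace = {"dispatch": dispatch}
exec(source, namespace)
discount = namespace["discount"]
```

The same description could just as well drive a generator for a C++ header or for a form full of GUI widgets; only the templates change.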

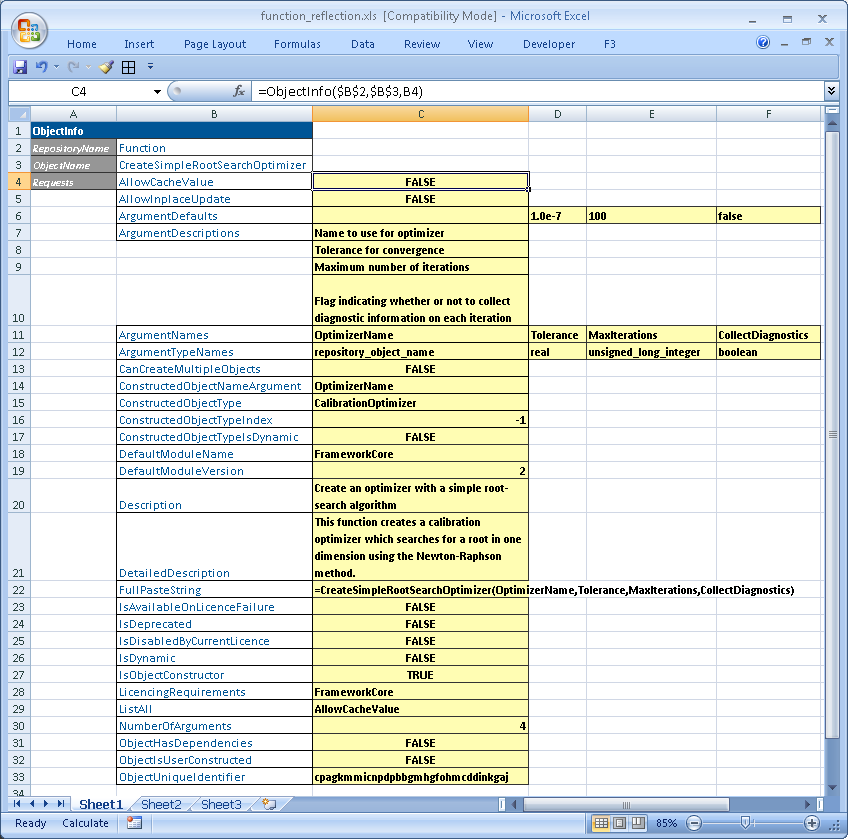

Reflection works on objects such as MyOptimizer, but also on functions themselves. Here is an example reflecting the properties of the function shown in Part 3:

Reflection of a function's properties.

In this implementation, ObjectInfo is the driving function for all reflection. It consumes the name and type of the object being queried, plus a list of requests for information. In a strongly-typed interface like that shown in this paper, the requests manifest as members of the Function class.
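The shape of that arrangement can be sketched in Python as follows. Only the overall pattern (a name, a type and a list of requests going in) comes from the description above; the request names, the `_catalogue` data, the `Discount` entry and the Python spelling `object_info` are assumptions made for this illustration.

```python
# Hypothetical reflection data held by the library, keyed by (type, name).
_catalogue = {
    ("Function", "Discount"): {
        "Description": "Discount a cash flow at a flat rate.",
        "ArgumentNames": ["amount", "rate", "years"],
        "ArgumentTypes": ["number", "number", "number"],
    },
}


def object_info(name, object_type, requests):
    """Answer each request about the named object, in request order."""
    try:
        entry = _catalogue[(object_type, name)]
    except KeyError:
        raise LookupError(f"unknown {object_type} {name!r}") from None
    return [entry[request] for request in requests]


class Function:
    """Typed view: each property issues one request through object_info."""

    def __init__(self, name):
        self.name = name

    @property
    def description(self):
        return object_info(self.name, "Function", ["Description"])[0]

    @property
    def argument_names(self):
        return object_info(self.name, "Function", ["ArgumentNames"])[0]

    @property
    def argument_types(self):
        return object_info(self.name, "Function", ["ArgumentTypes"])[0]
```

A caller in a typed environment writes `Function("Discount").argument_names` and never sees the request strings, while a variant-based caller such as a spreadsheet can pass the same requests to the underlying function directly.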