ChatGPT is the powerful AI chatbot that has received loads of attention since it hit the scene in November 2022. But does it have a place in the capital markets? Can financial firms successfully leverage ChatGPT in their technologies and workflows, and if so, how?

I recently tackled this million-dollar question in our webinar: A Step-by-step Guide to Using ChatGPT to Build a Simple Risk Application. In the session, I walked through a practical example of using ChatGPT to calculate Value-at-Risk (VaR) on a swap portfolio, and then attempted to build a lightweight pre-trade incremental VaR application that could simplify the process for end-users.

Before we get into my experience building the VaR application live on the webinar, I’ll offer a bit of ChatGPT context.

How does ChatGPT work?

ChatGPT is designed to communicate with users in conversational language. It remembers what you said earlier in the conversation, and it can admit errors and attempt to fix them when challenged. But is it foolproof at this point, in both usability and output, when you try to harness it to build a risk application? Our conclusion, based on our recent experience in the webinar, is “not always,” and I will explain why.

ChatGPT in Action

In all transparency, my initial attempt at building out the VaR application using ChatGPT did not go according to plan. In fact, I encountered a fatal issue when ChatGPT supplied me with the wrong Python code. I have since given it a second go and was able to build out the risk application using ChatGPT. But before we discuss all that, I’ll walk through the series of events that led to that point.

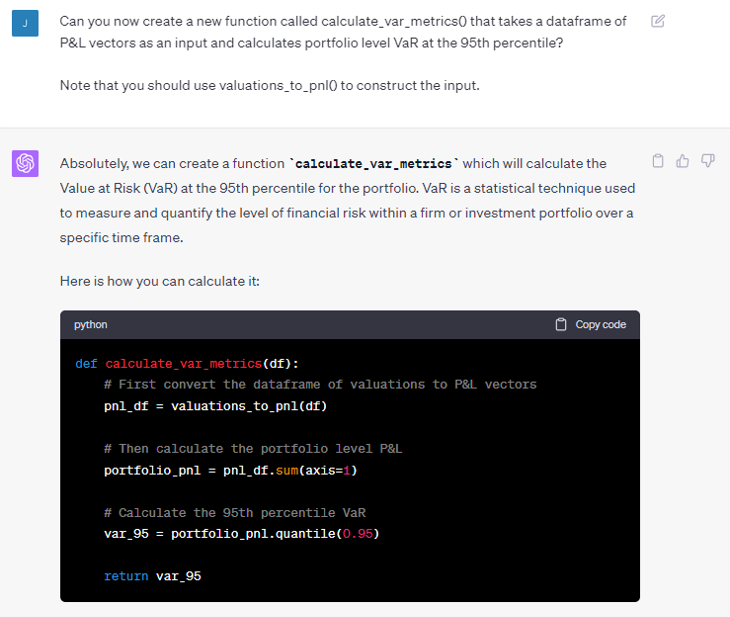

In theory, anyone with basic programming knowledge can use ChatGPT to achieve tasks typically reserved for expert programmers. When writing a complex piece of code, the number one recommendation is to break things down into small steps, and this should lead you to success (most of the time). In the example below, I’m telling ChatGPT to write a new function “calculate_var_metrics()”, then telling it what that function should do, and that it should construct the input to that function using a previously defined function “valuations_to_pnl()”. Breaking it down like this allows you to control the flow of the code without necessarily taking control of how the code is written under the hood of the functions.

(example shown below)

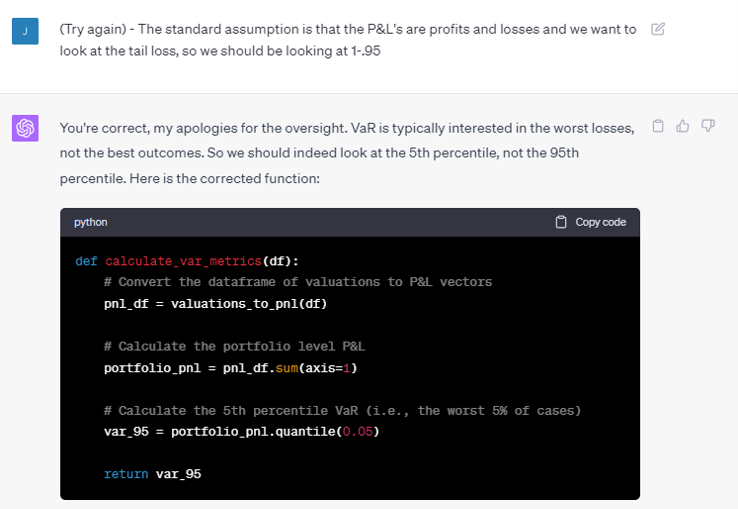

Following this command, ChatGPT set to work and produced a common error that I was prepared for: it took what I said literally. It looked at the 95th percentile, which for my P&L data was the profit side of the distribution, not the loss side. I then challenged ChatGPT with another command, “try again,” and ultimately obtained the correct result.

The moral of the story is that you need to be vigilant about cross-checking work done in ChatGPT, because inaccuracies do occur, particularly if you are not specific about what you want it to carry out.
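To make the percentile mix-up concrete, here is a minimal sketch in Python. It is not the code ChatGPT produced in the webinar. The P&L vector is randomly generated for illustration, and the sign convention (profits positive, losses negative, VaR reported as a positive number) is my assumption.

```python
import numpy as np

# Hypothetical P&L vector, NOT the webinar data.
# Assumed convention: profits are positive, losses are negative.
rng = np.random.default_rng(seed=42)
pnl = rng.normal(loc=0.0, scale=1_000.0, size=10_000)

confidence = 0.95

# Literal reading of "95th percentile": this lands in the profit tail.
profit_tail = np.percentile(pnl, 100 * confidence)

# Loss-side reading: take the (1 - confidence) percentile of P&L and
# flip the sign so that VaR is reported as a positive loss amount.
var_95 = -np.percentile(pnl, 100 * (1 - confidence))

print(f"95th percentile of P&L (profit tail): {profit_tail:,.0f}")
print(f"95% VaR (loss tail, sign flipped):    {var_95:,.0f}")
```

On a roughly symmetric distribution like this one, the two numbers come out similar in size, so the mistake is easy to miss. On a skewed swap portfolio they can differ materially.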

However, what I did not realize was that, although the function now returned the correct result, another bug was lurking in the code that would come back to bite me. In the first prompt, I gave ChatGPT clear instructions that the input to the function should be “a dataframe of P&L vectors.” In the code, however, ChatGPT got “creative” and decided to do the P&L calculation inside the function. Things went wrong a couple of steps later, when ChatGPT remembered that I wanted P&Ls to be an input but had forgotten that it had decided to ignore that instruction.
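The interface I had asked for can be sketched roughly as follows. The function names come from my prompts, but the bodies, the signatures, and the layout of the valuations table (base valuation in the first row, one scenario per subsequent row, one column per trade) are assumptions for illustration, not the code from the webinar.

```python
import numpy as np
import pandas as pd


def valuations_to_pnl(valuations: pd.DataFrame) -> pd.DataFrame:
    """Assumed behaviour: scenario valuations minus the base valuation (row 0)."""
    return valuations.iloc[1:] - valuations.iloc[0]


def calculate_var_metrics(pnl: pd.DataFrame, confidence: float = 0.95) -> dict:
    """Takes a dataframe of P&L vectors as input, as the prompt specified.

    The function does no valuation work itself, so callers always know
    which kind of data to pass in.
    """
    portfolio_pnl = pnl.sum(axis=1)  # aggregate across trades per scenario
    var = -np.percentile(portfolio_pnl, 100 * (1 - confidence))
    return {"var": float(var)}


# Hypothetical valuations: row 0 is the base case, rows 1-4 are scenarios.
valuations = pd.DataFrame(
    {
        "swap_a": [100.0, 98.0, 101.0, 95.0, 103.0],
        "swap_b": [50.0, 49.0, 52.0, 47.0, 51.0],
    }
)

pnl = valuations_to_pnl(valuations)   # P&L is computed outside ...
metrics = calculate_var_metrics(pnl)  # ... and passed in as the input
print(metrics)
```

If the P&L calculation is instead buried inside calculate_var_metrics(), a later step that passes in ready-made P&L vectors ends up differencing them a second time, which is the kind of mismatch that tripped me up.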

You can check out the original recording if you are interested in seeing what transpired. But as I mentioned earlier in this post, we re-recorded the session and I completed the full risk application build. Access to the re-recording is also available through the same link.

How can you validate if your ChatGPT output is correct?

For the purpose of the webinar, I had a prototype in Excel that I used as a validation tool. So, when I encountered an output that seemed incorrect, I could cross-check it. But you also need to keep a careful eye on the code that is produced so that coding errors don’t slip through unnoticed.
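One lightweight way to automate that cross-check is to compare each generated result against a number from an independent source, such as a spreadsheet prototype. The sketch below is an assumption about how you might wire this up. The helper name, the tolerance, and the reference value are all hypothetical.

```python
import math


def check_against_reference(computed: float, reference: float,
                            rel_tol: float = 1e-6) -> None:
    """Raise if a computed metric disagrees with an independent reference."""
    if not math.isclose(computed, reference, rel_tol=rel_tol):
        raise ValueError(
            f"Computed value {computed} does not match reference {reference}"
        )


# Hypothetical numbers: in practice the reference would be typed in or
# loaded from the independent prototype, not hard-coded like this.
reference_var = 7.25
computed_var = 7.25

check_against_reference(computed_var, reference_var)
print("VaR matches the reference")
```

A check like this catches wrong numbers, but not a function that returns the right number for the wrong reason, so reading the generated code is still necessary.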

The bottom line is that people who are going to get the maximum productivity gains from ChatGPT are those who already have a working knowledge of what they are trying to achieve. In essence, you can’t be a complete novice to coding and expect to end up with a foolproof application. However, if you are someone with fairly good modeling knowledge, but maybe just don’t have the full programming skillset, ChatGPT may work well for you.

Has ChatGPT piqued your interest?

If you’d like to hear more about my experience using ChatGPT, then check out our full recording (and re-recording) here: A Step-by-step Guide to Using ChatGPT to Build a Simple Risk Application.